The Cost of Building Cloud Agents

Enterprises are converging on cloud agents as the future of software engineering — and many are concluding they should build their own. Posts like Stripe's, detailing how they built a homegrown cloud agent, make the path look achievable.

We've spent over two years building cloud agent infrastructure for Devin at Cognition. That experience has taught us how deep the technical investment actually goes — and has left us convinced that for most enterprises, building in-house is the wrong call. Not because it can’t be done, but because by the time you finish, your competitors will be quarters ahead in changing how they build software.

The Challenges of Building Cloud Agent Infrastructure

Stripe is the most referenced example — and for good reason. But Stripe didn't start where most enterprises do. They had already invested heavily in devboxes: full cloud development environments running on dedicated EC2 instances. By the time they added an agent harness on top, many of the hardest infrastructure problems were already solved.

Most enterprises don't have that foundation. Few companies have spent years building the necessary infrastructure for cloud agents. Instead, for most, the natural starting point is containerizing a CLI agent and moving execution to the cloud, which introduces a different set of problems.

Security challenges with containerized agents

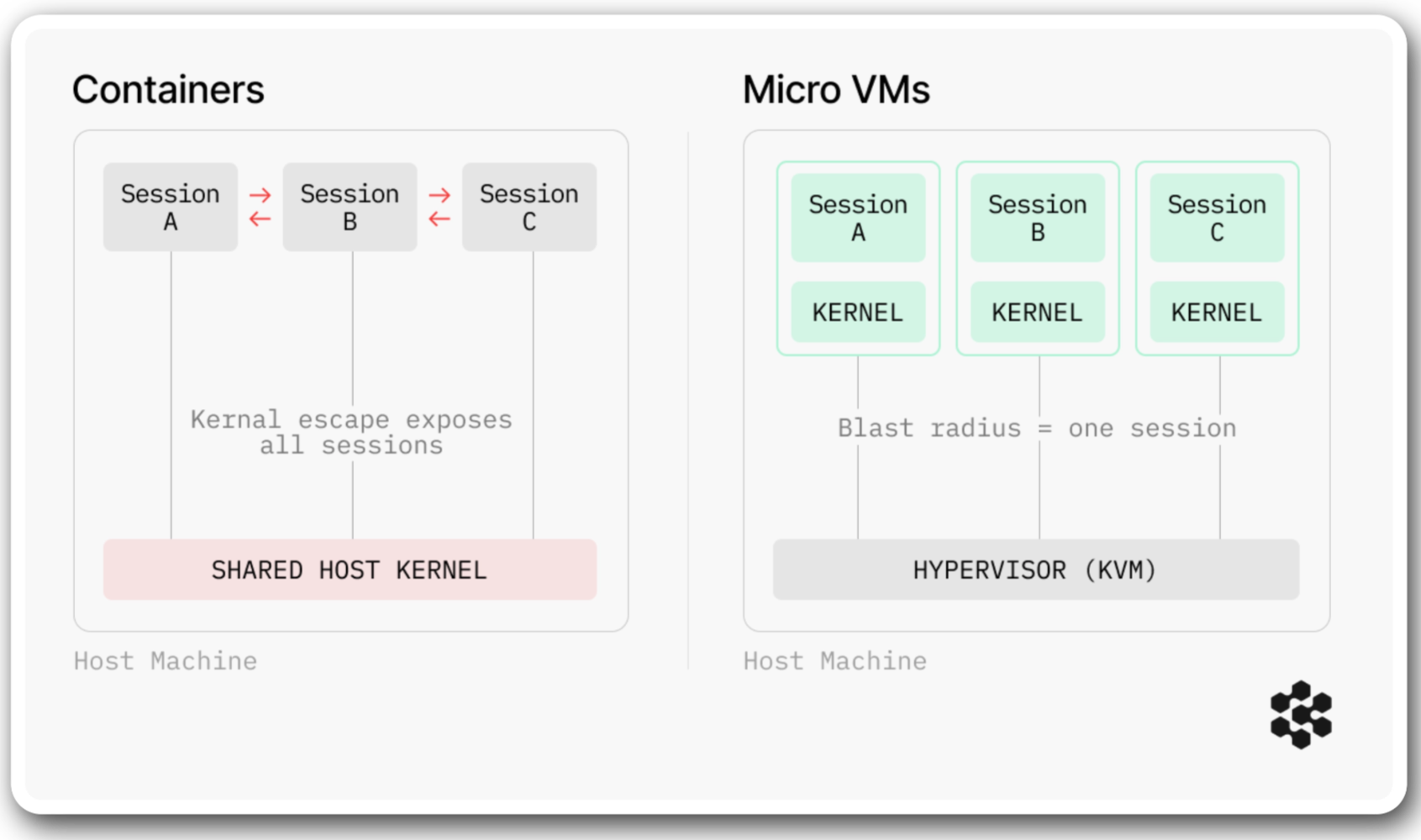

A container is a lightweight runtime that shares the host machine's kernel. That shared kernel creates a security problem specific to agents: agents generate their own code, run arbitrary commands, and probe the environment in unpredictable ways. If an agent triggers a kernel-level escape, one compromised session can access every other container's filesystems, credentials, and network connections.

The industry consensus for running untrusted code is VM-level isolation — each workload gets its own kernel, with no shared attack surface. This is the approach we took with Devin, and it's the direction the broader infrastructure community has been moving toward. But standing up VM-based isolation for agent workloads is a significant infrastructure project in itself.

Limitations to agent autonomy

Even for teams that solve the security problem, a deeper capability gap remains.

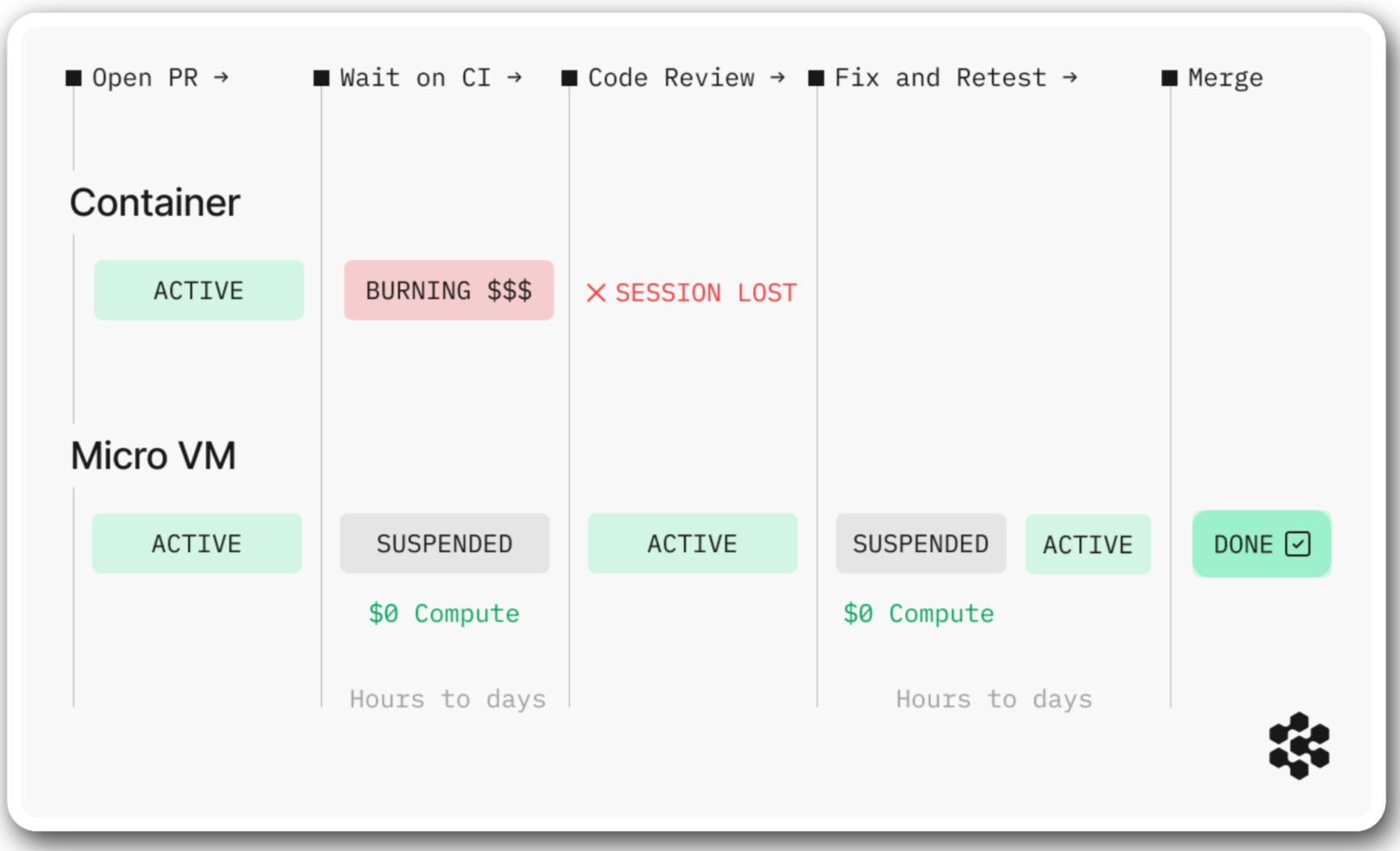

Real engineering work is asynchronous. An agent opens a PR, waits on CI, responds to code review, reruns tests, pushes a follow-up commit. Between each step there are gaps of minutes, hours, sometimes days, during which the agent must preserve its full working state. For bounded work like dependency upgrades, a single-pass agent that completes and exits is enough. But work that spans the async gaps of the software development lifecycle (SDLC) remains out of reach for that kind of agent.

An agent that handles these async breaks needs three capabilities: fast startup with a fully provisioned environment, the ability to suspend and resume without losing state, and the ability to reconcile events that occurred during suspension, such as CI results or review comments.

Containers cannot provide these reliably. There is no mature, widely supported way to snapshot an individual container's full state, shut down compute, and restore it later. In practice, a containerized agent can only survive async breaks by burning compute to stay alive, and if the container is rescheduled, times out, or crashes, the session is lost.
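To make the suspend, resume, and reconcile cycle concrete, here is a minimal Python sketch. Everything in it is hypothetical and chosen for illustration: the `Session` and `Event` classes, the sequence-number scheme, and the plain dictionary standing in for a real machine snapshot. It shows only the control flow, not how any particular platform implements it.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class State(Enum):
    RUNNING = "running"
    SUSPENDED = "suspended"


@dataclass
class Event:
    seq: int      # assumed: increasing sequence number assigned by the event source
    kind: str     # e.g. "ci_result" or "review_comment"
    payload: str


@dataclass
class Session:
    state: State = State.RUNNING
    last_seen_seq: int = 0               # highest event sequence already handled
    snapshot: Optional[dict] = None      # stand-in for a full machine snapshot
    handled: list = field(default_factory=list)

    def suspend(self, working_state: dict) -> None:
        """Persist the working state so compute can be released."""
        self.snapshot = dict(working_state)
        self.state = State.SUSPENDED

    def resume(self, inbox: list) -> dict:
        """Restore state, then replay events that arrived while suspended."""
        if self.state is not State.SUSPENDED or self.snapshot is None:
            raise RuntimeError("session is not suspended")
        self.state = State.RUNNING
        # Replay in order and skip anything already handled,
        # so a redelivered event has no effect.
        for event in sorted(inbox, key=lambda e: e.seq):
            if event.seq <= self.last_seen_seq:
                continue
            self.handled.append(f"{event.kind}:{event.payload}")
            self.last_seen_seq = event.seq
        return self.snapshot


# Usage: the agent parks itself while CI runs, then catches up on resume.
session = Session()
session.suspend({"branch": "fix-login", "step": "await_ci"})
missed = [Event(2, "review_comment", "rename helper"), Event(1, "ci_result", "failed")]
restored = session.resume(missed)
```

The point of the sequence number is that resuming becomes safe to retry: if the same CI result is delivered twice, the second copy is ignored rather than acted on again.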

Enterprise operations

Deploying agents across an engineering organization requires additional infrastructure for orchestration, governance, and system access.

- Orchestration: Each agent session is unique — tied to a specific task and engineer's permissions. Running hundreds concurrently requires provisioning the right environment for each one, routing sessions correctly, predicting demand to keep warm VM pools ready, and keeping every provisioned environment current as codebases change daily.

- Governance: Each session must inherit the dispatching engineer's permissions across every system it touches, with every action recorded in a tamper-evident audit trail. Building and maintaining identity chaining, access scoping, and audit logging at enterprise scale is its own engineering project that requires ongoing maintenance.

- Integrations: An agent is only as useful as the systems it can reach — CI, monitoring, package registries, documentation, source control. Each has its own authentication model, permission scoping, and maintenance burden. Stripe has an internal MCP server with over 400 tools to keep their agents connected. That is the scale of investment this layer demands.
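As one illustration of the governance point, a tamper-evident audit trail is often approximated with a hash chain, where each entry commits to the one before it. The sketch below is a minimal Python version under stated assumptions: the record fields (`actor`, `on_behalf_of`, `action`) and the function names are hypothetical, and a real deployment would also need durable storage, signing, and an externally anchored head hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the record together with the previous entry's hash."""
    body = json.dumps(record, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + body).hexdigest()


def append(log: list, actor: str, on_behalf_of: str, action: str) -> None:
    """Add an entry that records which session acted, and for which engineer."""
    record = {"actor": actor, "on_behalf_of": on_behalf_of, "action": action}
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering if the most recent hash is also stored somewhere the writer cannot rewrite. Otherwise an attacker could rebuild the whole chain from scratch.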

Changing How Software Gets Built

The infrastructure is only the first phase. The second is transforming how your engineering organization actually works with agents. And that work cannot start until agents are deployed.

Engineers need to develop fluency in a fundamentally new way of working: delegating tasks to a fleet of agents and reviewing output across dozens of concurrent sessions. These are new skills, and they take practice. At the same time, the organization has to evolve: planning around agent capacity, adapting review workflows, rethinking team sizes when a single engineer directing parallel agents produces what a small team used to. Very little of this can be designed in advance — it emerges through months of operating with agents on real projects.

Our forward-deployed engineers (FDEs) accelerate this change management process. Because they bring playbooks from dozens of prior Fortune 500 deployments, the recurring challenges of allocating organizational resources, navigating security reviews, and scaling adoption across the org don't have to be solved from scratch.

Across our deployments, we've seen that companies that do this work arrive at a fundamentally different engineering operating model. Engineers spend less time implementing and more time defining goals, setting intent, and managing execution. The constraint moves from "how will we build this?" to "what should we build?"

Itaú, the largest private bank in Latin America, is eleven months into this shift with nearly 17,000 engineers on our platform — completing monolithic migrations 5 to 6x faster, auto-remediating 70% of static-analysis security vulnerabilities, and reducing a major compliance project from years to months.

Every year spent building infrastructure is a year you are not deploying agents, not going through this transformation, and not compounding the returns. That is the real cost of building.

What We've Built

Over the past two years, we've solved these technical challenges so enterprises can skip the infrastructure build and start deploying agents immediately.

Instead of containers, Devin runs each session in its own microVM — a full machine with a dedicated Linux kernel, hardware-backed KVM isolation, and no shared kernel. There is no path from one session to another's filesystems, credentials, or network connections.

Because each session is its own machine, agents can run a full browser, desktop applications, and arbitrary tool stacks. This architecture also enables us to snapshot, suspend, and restore full machine state at the hypervisor level: compute shuts down when a session is idle, and on resume the full state is restored and events that occurred during suspension are reconciled.

On top of this, our infra team, after over a year of specialized hypervisor engineering, built a custom orchestration layer to manage thousands of concurrent VMs — predicting demand, keeping warm pools ready, recovering crashed sessions, and tearing down completed ones.
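For a sense of the arithmetic behind "keeping warm pools ready", here is a deliberately simple Python sketch. It is an illustration only: the function names, the headroom factor, and the sizing rule (expected arrivals during one boot window, padded by a safety margin) are assumptions for this example, not a description of the production orchestration layer.

```python
import math


def warm_pool_target(arrivals_per_min: float, boot_minutes: float, headroom: float = 1.5) -> int:
    """Estimate how many VMs to keep pre-booted.

    Sessions that arrive while a replacement VM is still booting must be
    served from the pool, so the pool covers one boot window of demand,
    padded by a headroom factor for bursts.
    """
    return math.ceil(arrivals_per_min * boot_minutes * headroom)


def vms_to_start(ready: int, booting: int, target: int) -> int:
    """How many new VMs to launch now; never negative."""
    return max(0, target - ready - booting)


# Usage: a forecast of 4 new sessions per minute with a 2-minute boot time.
target = warm_pool_target(arrivals_per_min=4, boot_minutes=2)
to_start = vms_to_start(ready=5, booting=3, target=target)
```

Even this toy version shows why demand prediction matters: the target depends directly on the arrival forecast, so an underestimate means cold boots for engineers and an overestimate means idle machines.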

We built Devin because we believe cloud agents will fundamentally change how software is built. That shift is underway. If you're evaluating whether to build or buy — reach out here.